Integrate Amazon S3 Data in Pentaho Data Integration

Build ETL pipelines based on Amazon S3 data in the Pentaho Data Integration tool.

The CData JDBC Driver for Amazon S3 enables access to live Amazon S3 data from data pipelines. Pentaho Data Integration is an Extraction, Transformation, and Loading (ETL) engine that extracts data, cleanses it, and stores it in a uniform, accessible format. This article shows how to connect to Amazon S3 data as a JDBC data source and build jobs and transformations based on Amazon S3 data in Pentaho Data Integration.

Configure Amazon S3 Connectivity

To authorize Amazon S3 requests, provide the credentials for an administrator account or for an IAM user with custom permissions. Set AccessKey to the access key ID and SecretKey to the secret access key.

Note: You can connect as the AWS account administrator, but it is recommended to use IAM user credentials to access AWS services.

For information on obtaining the credentials and other authentication methods, refer to the Getting Started section of the Help documentation.
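Hardcoded keys are easy to leak. The minimal Java sketch below assumes the credentials are exported as the conventional AWS environment variables AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY (a convention chosen for this example, not something the CData driver reads on its own), assembles the JDBC URL from them, and fails fast when either value is missing. The class name S3UrlFromEnv is hypothetical.

```java
import java.util.Map;

public class S3UrlFromEnv {

    // Builds a CData Amazon S3 JDBC URL from an environment-style map.
    // Assumption: credentials live in the conventional AWS variable names.
    static String fromEnv(Map<String, String> env) {
        String accessKey = env.get("AWS_ACCESS_KEY_ID");
        String secretKey = env.get("AWS_SECRET_ACCESS_KEY");
        if (accessKey == null || accessKey.isBlank()
                || secretKey == null || secretKey.isBlank()) {
            // Fail fast instead of producing a URL the driver would reject later.
            throw new IllegalStateException(
                "Set AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY first");
        }
        return "jdbc:amazons3:AccessKey=" + accessKey + ";SecretKey=" + secretKey + ";";
    }

    public static void main(String[] args) {
        try {
            String url = fromEnv(System.getenv());
            // Report only the length so the secret key never reaches the console.
            System.out.println("Built connection string of length " + url.length());
        } catch (IllegalStateException e) {
            System.err.println(e.getMessage());
        }
    }
}
```

Taking a Map rather than calling System.getenv() inside fromEnv keeps the method easy to exercise with test values.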

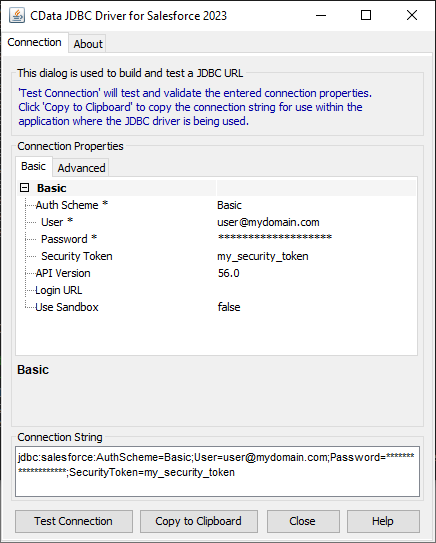

Built-in Connection String Designer

For help constructing the JDBC URL, use the connection string designer built into the Amazon S3 JDBC Driver. Either double-click the JAR file or run it from the command line:

```
java -jar cdata.jdbc.amazons3.jar
```

Fill in the connection properties and copy the connection string to the clipboard.

When you configure the JDBC URL, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.
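Because the connection string is a series of Name=Value; pairs, it can also be assembled in code. The sketch below appends the Max Rows setting as MaxRows=n; that spelling of the property name in the URL is an assumption to confirm in the driver's Help documentation, and the class and method names are hypothetical. Rather than guessing at the driver's quoting rules, it rejects any value containing a semicolon, since that would break the pair syntax.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class S3UrlBuilder {

    // Joins properties into "jdbc:amazons3:Name=Value;Name=Value;" in insertion order.
    static String build(Map<String, String> props) {
        StringBuilder sb = new StringBuilder("jdbc:amazons3:");
        for (Map.Entry<String, String> e : props.entrySet()) {
            if (e.getValue().contains(";")) {
                throw new IllegalArgumentException("Value for " + e.getKey() + " contains ';'");
            }
            sb.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return sb.toString();
    }

    // Convenience overload: credentials plus an optional row cap for design-time work.
    static String build(String accessKey, String secretKey, Integer maxRows) {
        Map<String, String> props = new LinkedHashMap<>();
        props.put("AccessKey", accessKey);
        props.put("SecretKey", secretKey);
        if (maxRows != null) {
            props.put("MaxRows", String.valueOf(maxRows)); // assumed property spelling
        }
        return build(props);
    }

    public static void main(String[] args) {
        // Prints jdbc:amazons3:AccessKey=a123;SecretKey=s123;MaxRows=100;
        System.out.println(build("a123", "s123", 100));
    }
}
```

Passing null for maxRows yields the same URL as the typical example in this article, so the row cap can be dropped once the pipeline moves from design to production.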

Below is a typical JDBC URL:

```
jdbc:amazons3:AccessKey=a123;SecretKey=s123;
```

Save your connection string for use in Pentaho Data Integration.

Connect to Amazon S3 from Pentaho DI

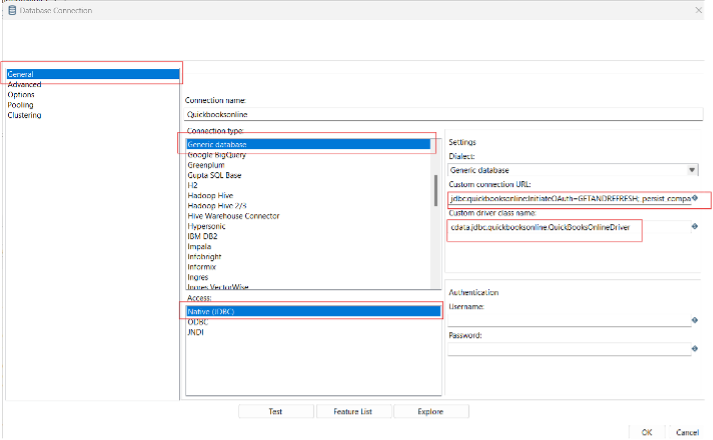

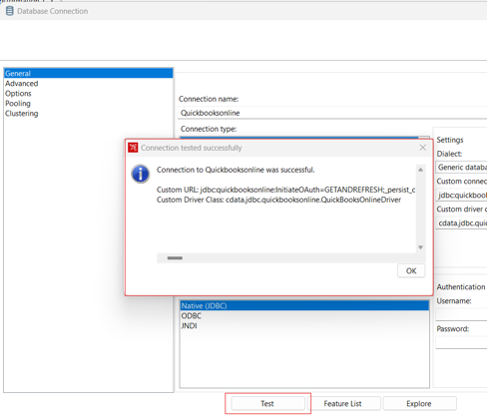

Open Pentaho Data Integration and select "Database Connection" to configure a connection to the CData JDBC Driver for Amazon S3:

- Click "General"

- Set Connection name (e.g. Amazon S3 Connection)

- Set Connection type to "Generic database"

- Set Access to "Native (JDBC)"

- Set Custom connection URL to your Amazon S3 connection string (e.g. jdbc:amazons3:AccessKey=a123;SecretKey=s123;)

- Set Custom driver class name to "cdata.jdbc.amazons3.AmazonS3Driver"

- Test the connection and click "OK" to save.
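Outside Pentaho, the same two settings (the custom connection URL and the driver class name) can be exercised with plain JDBC, which helps separate classpath problems from credential problems. This sketch is only a rough stand-in for the Test button, not a reproduction of what Pentaho checks; S3ConnectionCheck and its methods are hypothetical names. Depending on the driver, opening a connection may not contact AWS until the first query runs.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.SQLException;

public class S3ConnectionCheck {

    // Same value entered in Pentaho's "Custom driver class name" field.
    static final String DRIVER_CLASS = "cdata.jdbc.amazons3.AmazonS3Driver";

    // Reports whether a class can be loaded from the current classpath.
    static boolean isDriverAvailable(String className) {
        try {
            Class.forName(className);
            return true;
        } catch (ClassNotFoundException e) {
            return false;
        }
    }

    // Opens and immediately closes a connection; any SQLException counts as failure.
    static boolean canConnect(String url) {
        try (Connection conn = DriverManager.getConnection(url)) {
            return true;
        } catch (SQLException e) {
            System.err.println("Connection failed: " + e.getMessage());
            return false;
        }
    }

    public static void main(String[] args) {
        if (!isDriverAvailable(DRIVER_CLASS)) {
            System.err.println("Add cdata.jdbc.amazons3.jar to the classpath first.");
            return;
        }
        String url = args.length > 0 ? args[0] : "jdbc:amazons3:AccessKey=a123;SecretKey=s123;";
        System.out.println(canConnect(url) ? "Connection OK" : "Connection failed");
    }
}
```

To try it, put the driver JAR on the classpath, for example java -cp cdata.jdbc.amazons3.jar:. S3ConnectionCheck "jdbc:amazons3:AccessKey=a123;SecretKey=s123;" (on Windows, use ; instead of : as the classpath separator).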

Create a Data Pipeline for Amazon S3

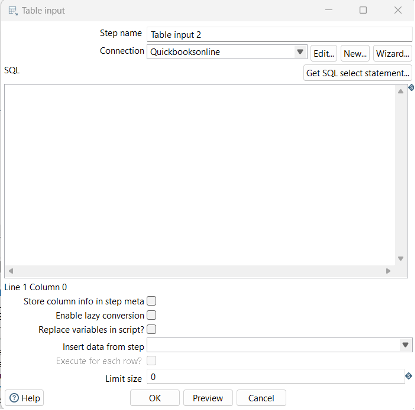

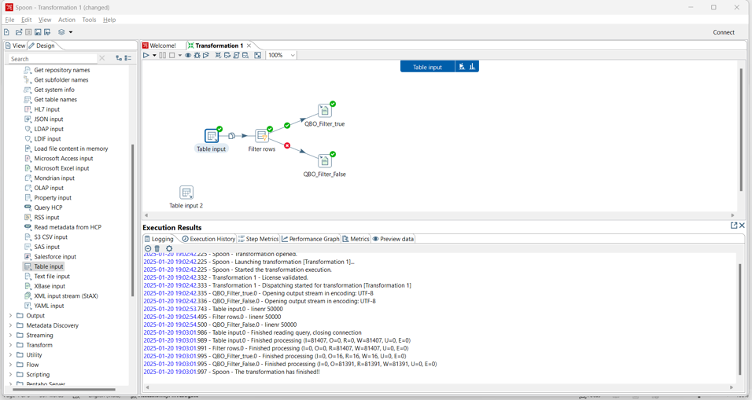

Once the connection to Amazon S3 is configured using the CData JDBC Driver, you are ready to create a new transformation or job.

- Click "File" >> "New" >> "Transformation/job"

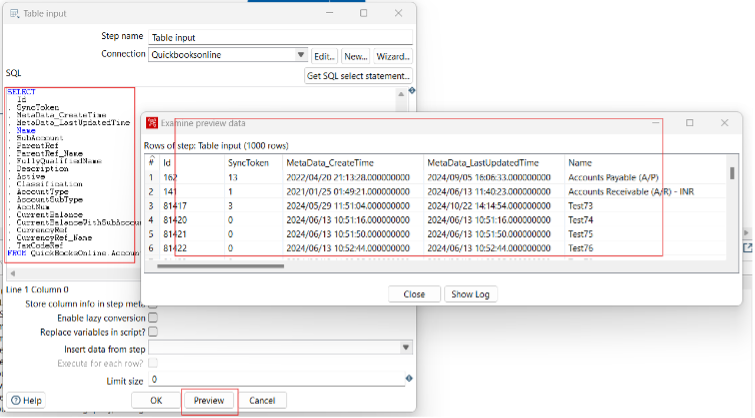

- Drag a "Table input" object into the workflow panel and select your Amazon S3 connection.

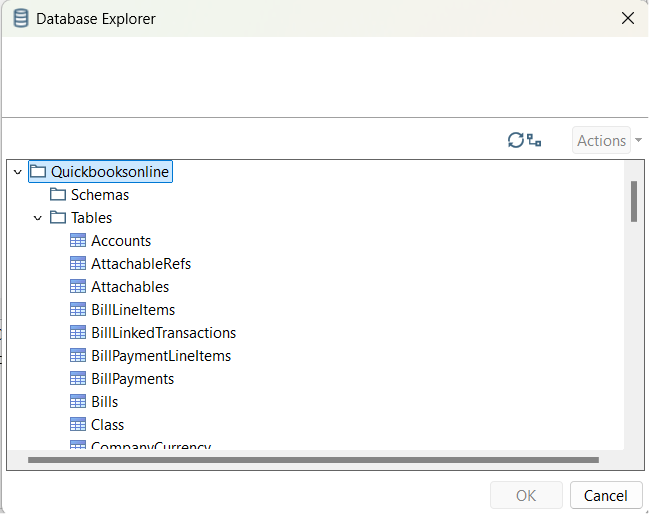

- Click "Get SQL select statement" and use the Database Explorer to view the available tables and views.

- Select a table and optionally preview the data for verification.
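The Table input workflow (browse tables, generate a SELECT statement, preview rows) maps onto standard JDBC calls. A sketch under that assumption: DatabaseMetaData.getTables stands in for the Database Explorer, getIdentifierQuoteString drives the quoting in the generated SELECT, and Statement.setMaxRows caps the preview. The class and method names are hypothetical, and the tables you see depend on the driver's data model.

```java
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;

public class S3TablePreview {

    // Quotes identifiers with the driver's quote string; JDBC reports " " when
    // quoting is unsupported, so a blank value means "don't quote".
    static String buildSelect(String quote, String table, List<String> columns) {
        String q = (quote == null || quote.isBlank()) ? "" : quote;
        String cols = columns.isEmpty() ? "*"
            : columns.stream().map(c -> q + c + q).collect(Collectors.joining(", "));
        return "SELECT " + cols + " FROM " + q + table + q;
    }

    // Lists table and view names of every type the driver reports.
    static List<String> listTables(Connection conn) throws SQLException {
        List<String> names = new ArrayList<>();
        try (ResultSet rs = conn.getMetaData().getTables(null, null, "%", null)) {
            while (rs.next()) {
                names.add(rs.getString("TABLE_NAME"));
            }
        }
        return names;
    }

    // Prints at most previewRows rows of the query, one pipe-separated line per row.
    static void preview(Connection conn, String sql, int previewRows) throws SQLException {
        try (Statement st = conn.createStatement()) {
            st.setMaxRows(previewRows);
            try (ResultSet rs = st.executeQuery(sql)) {
                ResultSetMetaData md = rs.getMetaData();
                while (rs.next()) {
                    List<String> row = new ArrayList<>();
                    for (int i = 1; i <= md.getColumnCount(); i++) {
                        row.add(String.valueOf(rs.getObject(i)));
                    }
                    System.out.println(String.join(" | ", row));
                }
            }
        }
    }

    public static void main(String[] args) throws SQLException {
        String url = args.length > 0 ? args[0] : "jdbc:amazons3:AccessKey=a123;SecretKey=s123;";
        try (Connection conn = DriverManager.getConnection(url)) {
            List<String> tables = listTables(conn);
            System.out.println("Tables: " + tables);
            if (!tables.isEmpty()) {
                String quote = conn.getMetaData().getIdentifierQuoteString();
                preview(conn, buildSelect(quote, tables.get(0), List.of()), 10);
            }
        }
    }
}
```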

At this point, you can continue your transformation or job by selecting a suitable destination and adding any transformations to modify, filter, or otherwise alter the data during replication.
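For comparison, the filter-and-load idea can be sketched in plain JDBC: stream rows from an Amazon S3 query, drop the ones a filter rejects, and batch-insert the rest into a destination table. This is a swapped-in illustration, not how Pentaho executes a transformation; it assumes source and destination share column names, and S3Replicator, RowFilter, and any table or column names you pass in are hypothetical.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;
import java.util.Collections;
import java.util.List;

public class S3Replicator {

    // Row filter that, unlike java.util.function.Predicate, may throw SQLException.
    @FunctionalInterface
    interface RowFilter {
        boolean keep(ResultSet row) throws SQLException;
    }

    // Builds "INSERT INTO t (a, b) VALUES (?, ?)" with one placeholder per column.
    static String buildInsert(String table, List<String> columns) {
        String placeholders = String.join(", ", Collections.nCopies(columns.size(), "?"));
        return "INSERT INTO " + table + " (" + String.join(", ", columns)
            + ") VALUES (" + placeholders + ")";
    }

    // Copies rows that pass the filter, sending inserts in batches. Returns rows written.
    static int copy(Connection src, String selectSql, Connection dst, String destTable,
                    List<String> columns, RowFilter filter, int batchSize) throws SQLException {
        int written = 0;
        int pending = 0;
        try (Statement st = src.createStatement();
             ResultSet rs = st.executeQuery(selectSql);
             PreparedStatement ins = dst.prepareStatement(buildInsert(destTable, columns))) {
            while (rs.next()) {
                if (!filter.keep(rs)) {
                    continue;
                }
                for (int i = 0; i < columns.size(); i++) {
                    // Assumes the source result set exposes the same column names.
                    ins.setObject(i + 1, rs.getObject(columns.get(i)));
                }
                ins.addBatch();
                written++;
                if (++pending == batchSize) {
                    ins.executeBatch();
                    pending = 0;
                }
            }
            if (pending > 0) {
                ins.executeBatch(); // flush the final partial batch
            }
        }
        return written;
    }
}
```

A call might look like copy(s3Conn, sql, destConn, "s3_objects", List.of("Key", "Size"), row -> row.getLong("Size") > 0, 500), where the destination table and column names are illustrative only.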

Free Trial & More Information

Download a free, 30-day trial of the CData JDBC Driver for Amazon S3 and start working with your live Amazon S3 data in Pentaho Data Integration today.