Explore Geographical Relationships in Azure Data Lake Storage Data with Power Map

Create data visualizations with Azure Data Lake Storage data in Power Map.

The CData ODBC Driver for Azure Data Lake Storage is easy to set up and use with self-service analytics solutions like Power BI, and Microsoft Excel provides built-in support for the ODBC standard. This article shows how to load current Azure Data Lake Storage data into Excel and start generating location-based insights from that data in Power Map.

Create an ODBC Data Source for Azure Data Lake Storage

If you have not already, first specify connection properties in an ODBC DSN (data source name). This is the last step of the driver installation. You can use the Microsoft ODBC Data Source Administrator to create and configure ODBC DSNs.

Authenticating to a Gen 1 DataLakeStore Account

Gen 1 uses OAuth 2.0 in Azure AD for authentication.

This requires an Active Directory web application, which you must create before configuring the connection.

To authenticate against a Gen 1 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen1.

- Account: Set this to the name of the account.

- OAuthClientId: Set this to the application Id of the app you created.

- OAuthClientSecret: Set this to the key generated for the app you created.

- TenantId: Set this to the tenant Id. See the TenantId property in the help documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
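The Gen 1 properties above can also be written as a single ODBC connection string. The following is a minimal sketch, not the driver's documented syntax: it assumes the driver is registered under its product name, and every value (account, Ids, secret, directory) is a hypothetical placeholder. It uses only the Python standard library to assemble the string.

```python
# Assumption: the driver is registered under its product name.
DRIVER_NAME = "CData ODBC Driver for Azure Data Lake Storage"


def odbc_value(value):
    """Brace-quote a value that contains characters special to ODBC strings."""
    text = str(value)
    if any(ch in text for ch in ";{}") or text != text.strip():
        # Inside a braced value, a closing brace is escaped by doubling it.
        return "{" + text.replace("}", "}}") + "}"
    return text


def build_connection_string(props):
    """Join properties as key=value pairs separated by semicolons."""
    pairs = ["Driver={" + DRIVER_NAME + "}"]
    pairs += [f"{key}={odbc_value(val)}" for key, val in props.items()]
    return ";".join(pairs) + ";"


# Placeholder values only; substitute the details of your own account and app.
gen1_props = {
    "Schema": "ADLSGen1",
    "Account": "myAccount",
    "OAuthClientId": "my-application-id",
    "OAuthClientSecret": "my-application-key",
    "TenantId": "my-tenant-id",
    "Directory": "/reports",  # optional; the root directory is used if omitted
}

gen1_connection_string = build_connection_string(gen1_props)
print(gen1_connection_string)
```

A string like this could be passed to any ODBC client (for example, the third-party pyodbc package via `pyodbc.connect`), although for Power Map the same values are normally entered in the DSN instead.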

Authenticating to a Gen 2 DataLakeStore Account

To authenticate against a Gen 2 DataLakeStore account, the following properties are required:

- Schema: Set this to ADLSGen2.

- Account: Set this to the name of the account.

- FileSystem: Set this to the file system which will be used for this account.

- AccessKey: Set this to the access key which will be used to authenticate the calls to the API. See the AccessKey property in the help documentation for more information on how to acquire this.

- Directory: Set this to the path which will be used to store the replicated file. If not specified, the root directory will be used.
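Before saving a Gen 2 configuration, it can help to confirm that none of the required properties were left empty. The sketch below is a hypothetical helper, not part of the driver: it treats Schema, Account, FileSystem, and AccessKey as required and Directory as optional, following the list above, and all values are placeholders.

```python
# Required Gen 2 properties, taken from the list above. Directory is optional
# because the root directory is used when it is not specified.
REQUIRED_GEN2 = ("Schema", "Account", "FileSystem", "AccessKey")


def missing_gen2_properties(props):
    """Return the names of required Gen 2 properties that are absent or empty."""
    return [name for name in REQUIRED_GEN2 if not props.get(name)]


# Placeholder settings with the access key deliberately left out.
gen2_settings = {
    "Schema": "ADLSGen2",
    "Account": "myAccount",
    "FileSystem": "myFileSystem",
}

missing = missing_gen2_properties(gen2_settings)
if missing:
    print("Missing required properties:", ", ".join(missing))
else:
    print("All required Gen 2 properties are set.")
```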

When you configure the DSN, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.
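As an illustration of the row limit, the sketch below appends a limit to a DSN-based connection string for design-time use. It rests on two assumptions: that the Max Rows setting is exposed in connection strings under the key `MaxRows`, and that a DSN named `CData ADLS Source` exists. Both names are hypothetical here, so check the driver's help documentation for the exact spelling.

```python
def with_max_rows(connection_string, max_rows):
    """Return a copy of the connection string with a row limit appended.

    Assumption: the Max Rows property uses the key "MaxRows".
    """
    if max_rows <= 0:
        raise ValueError("max_rows must be a positive integer")
    base = connection_string.rstrip(";")
    return f"{base};MaxRows={max_rows};"


# Hypothetical DSN name; a small limit keeps report design responsive.
design_time_string = with_max_rows("DSN=CData ADLS Source", 1000)
print(design_time_string)
```

Removing or raising the limit once the visualization is finalized lets the full data set load.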


Import Azure Data Lake Storage Data into Excel

You can import data into Power Map either from an Excel spreadsheet or from Power Pivot. For a step-by-step guide to use either method to import Azure Data Lake Storage data, see the "Using the ODBC Driver" section in the help documentation.

Geocode Azure Data Lake Storage Data

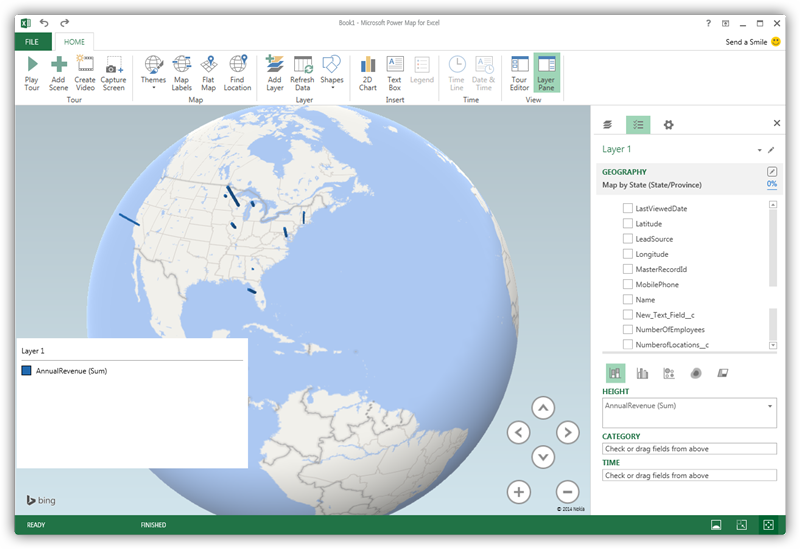

After importing the Azure Data Lake Storage data into an Excel spreadsheet or into Power Pivot, you can drag and drop Azure Data Lake Storage entities in Power Map. To open Power Map, click any cell in the spreadsheet and click Insert -> Map.

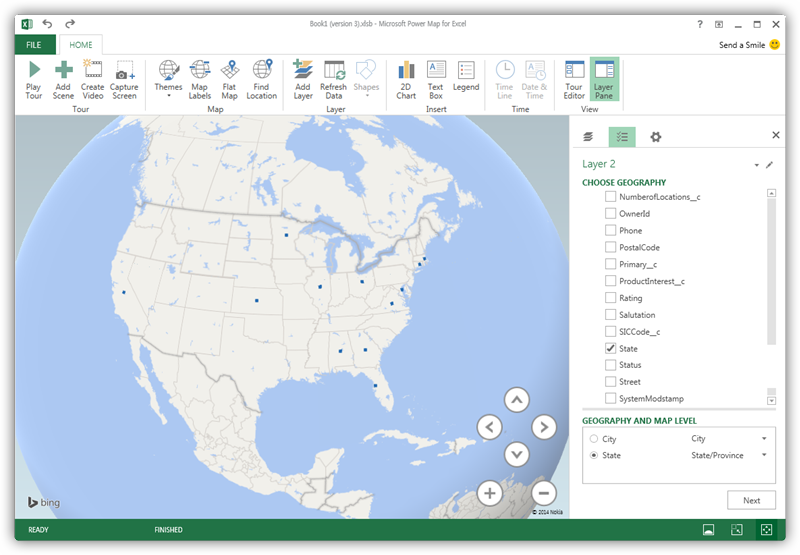

In the Choose Geography menu, Power Map detects the columns that have geographic information. In the Geography and Map Level menu in the Layer Pane, you can select the columns you want to work with. Power Map then plots the data, with each dot representing a record that has the corresponding geographic value. When you have selected the geographic columns you want, click Next.

Select Measures and Categories

You can then select the columns to chart; Power Map detects measures and categories automatically. The available chart types are Stacked Column, Clustered Column, Bubble, Heat Map, and Region.