Connect to Databricks Data in CloverDX (formerly CloverETL)

Transfer Databricks data using the visual workflow in the CloverDX data integration tool.

The CData JDBC Driver for Databricks enables you to use the data transformation components in CloverDX (formerly CloverETL) to work with Databricks as a source and a destination. In this article, you will use the JDBC Driver for Databricks to set up a simple transfer into a flat file.

About Databricks Data Integration

Accessing and integrating live data from Databricks has never been easier with CData. Customers rely on CData connectivity to:

- Access all versions of Databricks, from Runtime versions 9.1 through 13.x to both the Pro and Classic Databricks SQL versions.

- Leave Databricks in their preferred environment thanks to compatibility with any hosting solution.

- Securely authenticate in a variety of ways, including personal access token, Azure Service Principal, and Azure AD.

- Upload data to Databricks using Databricks File System, Azure Blob Storage, and AWS S3 Storage.

While many customers use CData's solutions to migrate data from different systems into their Databricks data lakehouse, several customers use our live connectivity solutions to federate connectivity between their databases and Databricks. These customers use SQL Server Linked Servers or PolyBase to get live access to Databricks from within their existing RDBMS.

Read more about common Databricks use-cases and how CData's solutions help solve data problems in our blog: What is Databricks Used For? 6 Use Cases.

Getting Started

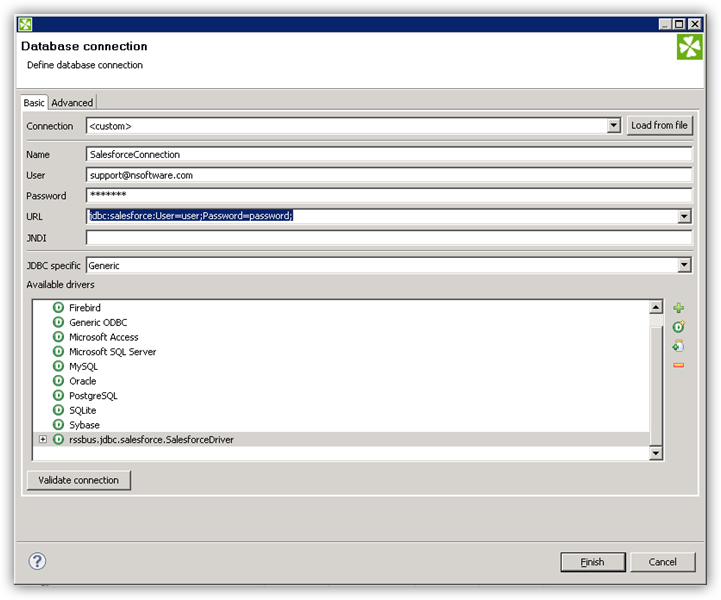

Connect to Databricks as a JDBC Data Source

- Create the connection to Databricks data. In a new CloverDX graph, right-click the Connections node in the Outline pane and click Connections -> Create Connection. The Database Connection wizard is displayed.

- Click the plus icon to load a driver from a JAR. Browse to the lib subfolder of the installation directory and select the cdata.jdbc.databricks.jar file.

- Enter the JDBC URL.

To connect to a Databricks cluster, set the properties as described below.

Note: You can find the required values in your Databricks instance by navigating to Clusters, selecting the desired cluster, and opening the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).

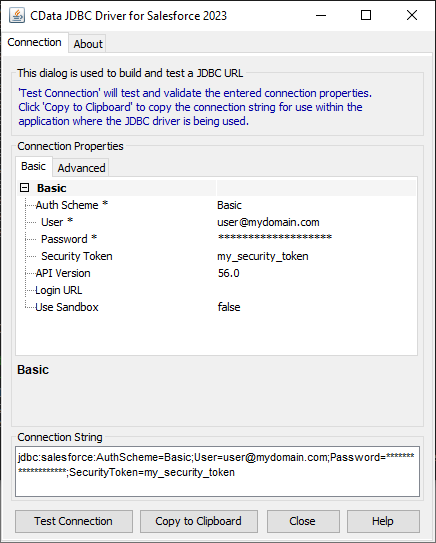

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Databricks JDBC Driver. Either double-click the JAR file or execute the JAR file from the command line.

java -jar cdata.jdbc.databricks.jar

Fill in the connection properties and copy the connection string to the clipboard.


A typical JDBC URL is below:

jdbc:databricks:Server=127.0.0.1;Port=443;TransportMode=HTTP;HTTPPath=MyHTTPPath;UseSSL=True;User=MyUser;Password=MyPassword;
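If you prefer to assemble the URL in code rather than with the designer, the semicolon-delimited `Key=Value;` layout shown above can be reproduced with a few lines of Java. This is a minimal sketch, not part of the driver: the class and method names are hypothetical, the values are placeholders, and it assumes the values contain no semicolons (any escaping rules are driver-specific and not handled here). The property names `Server`, `HTTPPath`, and `Token` are the ones listed earlier in this article.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper for illustration; not part of the CData driver.
class DatabricksJdbcUrl {

    // Joins connection properties into "jdbc:databricks:Key=Value;Key=Value;".
    // Assumes values contain no ';' characters.
    public static String build(Map<String, String> props) {
        StringBuilder url = new StringBuilder("jdbc:databricks:");
        for (Map.Entry<String, String> e : props.entrySet()) {
            url.append(e.getKey()).append('=').append(e.getValue()).append(';');
        }
        return url.toString();
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the properties in insertion order.
        Map<String, String> props = new LinkedHashMap<>();
        props.put("Server", "127.0.0.1");              // placeholder Server Hostname
        props.put("HTTPPath", "MyHTTPPath");           // placeholder HTTP Path
        props.put("Token", "MyPersonalAccessToken");   // placeholder access token
        System.out.println(build(props));
    }
}
```

Paste the printed string into the JDBC URL field of the Database Connection wizard. In practice, read the token from a secure store rather than hard-coding it.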

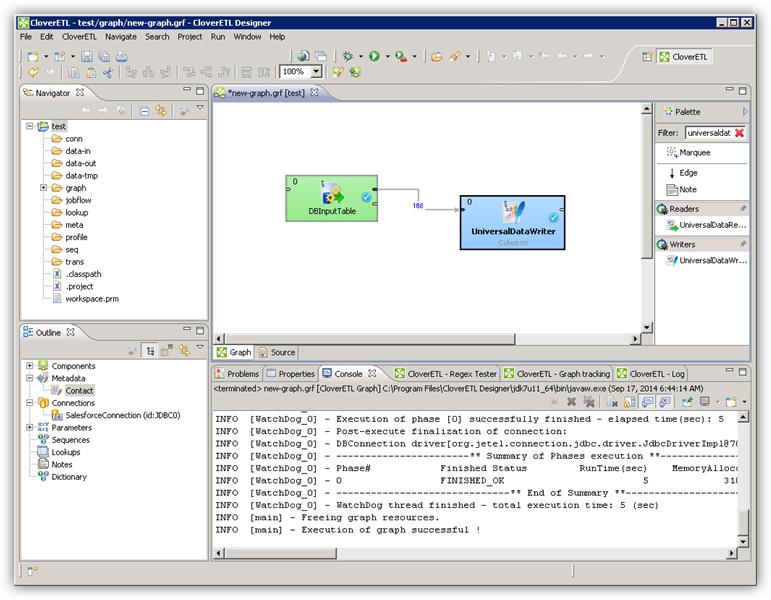

Query Databricks Data with the DBInputTable Component

- Drag a DBInputTable from the Readers section of the Palette onto the graph and double-click it to open the configuration editor.

- In the DB connection property, select the Databricks JDBC data source from the drop-down menu.

- Enter the SQL query. For example:

SELECT City, CompanyName FROM Customers WHERE Country = 'US'

Write the Output of the Query to a UniversalDataWriter

- Drag a UniversalDataWriter from the Writers section of the Palette onto the graph.

- Double-click the UniversalDataWriter to open the configuration editor and add a file URL.

- Right-click the DBInputTable and then click Extract Metadata.

- Connect the output port of the DBInputTable to the UniversalDataWriter.

- In the resulting Select Metadata menu for the UniversalDataWriter, choose the Customers table. (You can also open this menu by right-clicking the input port for the UniversalDataWriter.)

- Click Run to write to the file.
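To sanity-check the connection and query outside CloverDX, the same transfer can be sketched with plain JDBC. The example below is an illustration under stated assumptions, not how CloverDX implements its components: the class and method names are hypothetical, the driver JAR is assumed to be on the classpath and to register itself with `DriverManager`, and the comma-delimited quoting is a choice made for this sketch rather than the UniversalDataWriter's actual output format.

```java
import java.io.BufferedWriter;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.SQLException;
import java.sql.Statement;

// Hypothetical stand-in for the DBInputTable -> UniversalDataWriter graph.
class DatabricksFlatFileExport {

    // Quotes a field only when it contains a comma, a quote, or a line break.
    public static String escape(String field) {
        if (field == null) return "";
        boolean needsQuotes = field.contains(",") || field.contains("\"")
                || field.contains("\n") || field.contains("\r");
        return needsQuotes ? "\"" + field.replace("\"", "\"\"") + "\"" : field;
    }

    // Joins fields into one comma-delimited line.
    public static String formatRow(String... fields) {
        StringBuilder line = new StringBuilder();
        for (int i = 0; i < fields.length; i++) {
            if (i > 0) line.append(',');
            line.append(escape(fields[i]));
        }
        return line.toString();
    }

    // Runs the query and writes a header line plus one line per result row.
    public static void export(String jdbcUrl, String sql, Path target)
            throws SQLException, IOException {
        try (Connection conn = DriverManager.getConnection(jdbcUrl);
             Statement stmt = conn.createStatement();
             ResultSet rs = stmt.executeQuery(sql);
             BufferedWriter out = Files.newBufferedWriter(target)) {
            // Column labels play the role of the extracted metadata.
            int columns = rs.getMetaData().getColumnCount();
            String[] fields = new String[columns];
            for (int i = 0; i < columns; i++) {
                fields[i] = rs.getMetaData().getColumnLabel(i + 1);
            }
            out.write(formatRow(fields));
            out.newLine();
            while (rs.next()) {
                for (int i = 0; i < columns; i++) {
                    fields[i] = rs.getString(i + 1);
                }
                out.write(formatRow(fields));
                out.newLine();
            }
        }
    }

    public static void main(String[] args) throws Exception {
        if (args.length < 2) {
            System.err.println("usage: DatabricksFlatFileExport <jdbc-url> <output-file>");
            return;
        }
        String sql = "SELECT City, CompanyName FROM Customers WHERE Country = 'US'";
        export(args[0], sql, Paths.get(args[1]));
    }
}
```

Run it with the driver JAR on the classpath, passing the JDBC URL and an output file path as arguments. If this produces the rows you expect, the same URL and query should behave the same way in the CloverDX graph.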