Consume Databricks OData Feeds in SAP Lumira

Use the API Server to create data visualizations in SAP Lumira on Databricks feeds that reflect any changes to the underlying data.

You can use the CData API Server and the ADO.NET Provider for Databricks (or any of 200+ other ADO.NET Providers) to create data visualizations based on Databricks data in SAP Lumira. The API Server enables connectivity to live data: dashboards and reports can be refreshed on demand. This article shows how to create a chart that is always up to date.

About Databricks Data Integration

Accessing and integrating live data from Databricks has never been easier with CData. Customers rely on CData connectivity to:

- Access all versions of Databricks, from Runtime versions 9.1 through 13.X to both the Pro and Classic Databricks SQL versions.

- Leave Databricks in their preferred environment thanks to compatibility with any hosting solution.

- Securely authenticate in a variety of ways, including personal access token, Azure Service Principal, and Azure AD.

- Upload data to Databricks using Databricks File System, Azure Blob Storage, and AWS S3 Storage.

While many customers use CData's solutions to migrate data from different systems into their Databricks data lakehouse, several customers use our live connectivity solutions to federate connectivity between their databases and Databricks. These customers use SQL Server Linked Servers or PolyBase to get live access to Databricks from within their existing RDBMS.

Read more about common Databricks use cases and how CData's solutions help solve data problems in our blog: What is Databricks Used For? 6 Use Cases.

Getting Started

Set Up the API Server

Follow the steps below to begin producing secure Databricks OData services:

Deploy

The API Server runs on your own server. On Windows, you can deploy using the stand-alone server or IIS. On a Java servlet container, drop in the API Server WAR file. See the help documentation for more information and how-tos.

The API Server is also easy to deploy on Microsoft Azure, Amazon EC2, and Heroku.

Connect to Databricks

After you deploy the API Server and the ADO.NET Provider for Databricks, provide authentication values and other connection properties needed to connect to Databricks by clicking Settings -> Connection and adding a new connection in the API Server administration console.

To connect to a Databricks cluster, set the properties as described below.

Note: The needed values can be found in your Databricks instance by navigating to Clusters, selecting the desired cluster, and opening the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).
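The three properties above are entered in the API Server administration console, but it can help to see them gathered in one place. The following is a minimal sketch that assembles them into a semicolon-delimited `key=value` string, assuming the common ADO.NET-style connection string convention. The helper name `build_connection_string` and all of the sample values are hypothetical placeholders, not values from a real cluster.

```python
def build_connection_string(server: str, http_path: str, token: str) -> str:
    """Join Server, HTTPPath, and Token into a 'key=value;' string.

    Assumption: a semicolon-delimited ADO.NET-style format. Check the
    provider documentation for the exact syntax your version expects.
    """
    props = {"Server": server, "HTTPPath": http_path, "Token": token}
    for name, value in props.items():
        if not value:
            raise ValueError(f"{name} must not be empty")
        if ";" in value:
            # A semicolon inside a value would break the delimiter scheme.
            raise ValueError(f"{name} must not contain ';'")
    return "".join(f"{name}={value};" for name, value in props.items())


# Placeholder values for illustration only.
print(build_connection_string(
    server="example-workspace.cloud.databricks.com",
    http_path="sql/protocolv1/o/0/example-cluster",
    token="EXAMPLE_TOKEN",
))
```

Keeping the token out of source control (for example, by reading it from an environment variable) is advisable, since a personal access token grants access to the workspace.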

When you configure the connection, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.

You can then choose the Databricks entities you want to allow the API Server access to by clicking Settings -> Resources.

Authorize API Server Users

After determining the OData services you want to produce, authorize users by clicking Settings -> Users. The API Server uses authtoken-based authentication and supports the major authentication schemes. Access can also be restricted based on IP address; by default, only connections from the local machine are allowed. You can authenticate as well as encrypt connections with SSL.

Connect to Databricks from SAP Lumira

Follow the steps below to retrieve Databricks data into SAP Lumira. You can execute an SQL query or use the UI.

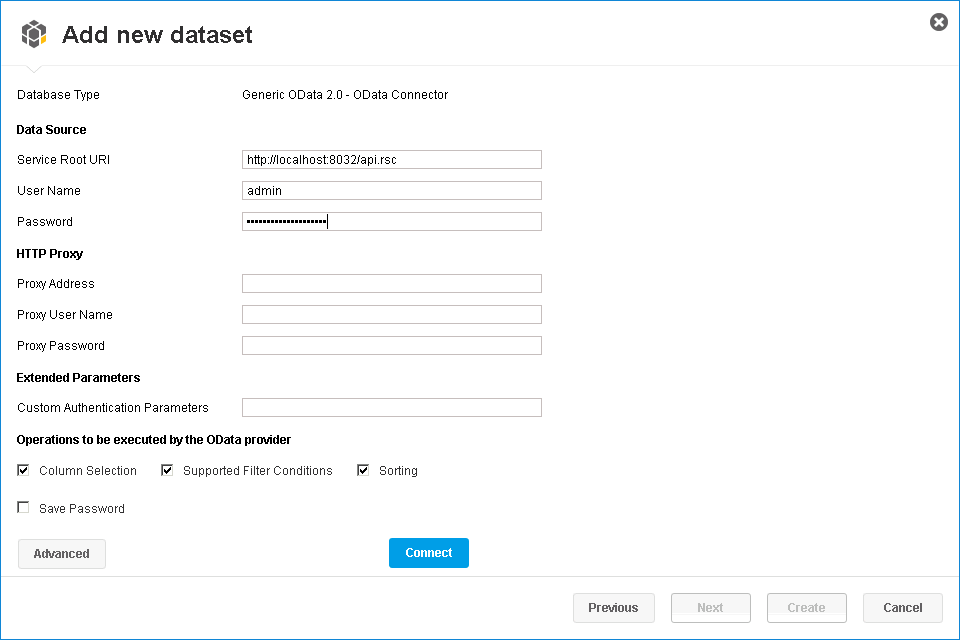

- In SAP Lumira, click File -> New -> Query with SQL. The Add New Dataset dialog is displayed.

- Expand the Generic section and click the Generic OData 2.0 Connector option.

- In the Service Root URI box, enter the OData endpoint of the API Server. This URL will resemble the following:

  https://your-server:8032/api.rsc

- In the User Name and Password boxes, enter the username and authtoken of an API user. These credentials will be used in HTTP Basic authentication.

- Select entities in the tree or enter an SQL query. This article imports Databricks Customers entities.

- When you click Connect, SAP Lumira will generate the corresponding OData request and load the results into memory. You can then use any of the data processing tools available in SAP Lumira, such as filters, aggregates, and summary functions.
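To make the request that SAP Lumira issues more concrete, here is a rough sketch of an equivalent OData request built by hand. It uses the service root from the steps above, the Customers entity, HTTP Basic authentication with a username and authtoken, and the standard OData `$select` and `$top` query options. The function name `build_odata_request`, the column names `Id` and `Name`, and the credentials are hypothetical. The exact request Lumira generates may differ.

```python
import base64
from urllib.parse import quote, urlencode
from urllib.request import Request

# Service root as shown in the steps above; replace with your own host.
SERVICE_ROOT = "https://your-server:8032/api.rsc"


def build_odata_request(entity, user, authtoken, select=None, top=None):
    """Build (but do not send) a GET request for one OData entity set."""
    options = {}
    if select:
        options["$select"] = ",".join(select)  # limit the returned columns
    if top is not None:
        options["$top"] = str(top)             # limit the returned rows

    url = f"{SERVICE_ROOT}/{quote(entity)}"
    if options:
        # Keep '$' and ',' readable instead of percent-encoding them.
        url += "?" + urlencode(options, safe="$,")

    # HTTP Basic: base64("user:authtoken") in the Authorization header.
    credentials = base64.b64encode(f"{user}:{authtoken}".encode("utf-8"))
    headers = {"Authorization": "Basic " + credentials.decode("ascii")}
    return Request(url, headers=headers)


# Hypothetical user, token, and column names.
req = build_odata_request("Customers", "apiuser", "secret",
                          select=["Id", "Name"], top=10)
print(req.full_url)
# To send it: urllib.request.urlopen(req), against a reachable API Server.
```

Because Basic authentication only encodes the credentials, it should be used over an SSL-encrypted connection, as the `https` service root implies.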

Create Data Visualizations

After you have imported the data, you can create data visualizations in the Visualize room. Follow the steps below to create a basic chart.

- In the Measures and Dimensions pane, drag measures and dimensions onto the x-axis and y-axis fields in the Visualization Tools pane. SAP Lumira automatically detects dimensions and measures from the metadata service of the API Server.

  By default, the SUM function is applied to all measures. Click the gear icon next to a measure to change the default summary.

- In the Visualization Tools pane, select the chart type.

- In the Chart Canvas pane, apply filters, sort by measures, add rankings, and update the chart with the current Databricks data.
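As an illustration of what the default SUM summary means for a chart, the sketch below groups rows by a dimension and totals a measure. It is not how SAP Lumira is implemented. The function name `sum_by_dimension`, the `Country` and `Balance` columns, and the sample rows are all hypothetical.

```python
from collections import defaultdict


def sum_by_dimension(rows, dimension, measure):
    """Total `measure` for each distinct value of `dimension`."""
    totals = defaultdict(float)
    for row in rows:
        totals[row[dimension]] += row[measure]
    return dict(totals)


# Hypothetical rows, standing in for imported Customers data.
rows = [
    {"Country": "US", "Balance": 100.0},
    {"Country": "US", "Balance": 50.0},
    {"Country": "DE", "Balance": 25.0},
]
print(sum_by_dimension(rows, "Country", "Balance"))
# prints {'US': 150.0, 'DE': 25.0}
```

Switching the summary through the gear icon corresponds to replacing the addition here with another aggregate, such as a count or an average.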