Create an SAP BusinessObjects Universe on the CData ODBC Driver for Databricks

Provide connectivity to Databricks data through an SAP BusinessObjects universe.

This article shows how to create and publish an SAP BusinessObjects universe on the CData ODBC Driver for Databricks. You will connect to Databricks data from the Information Design Tool as well as the Web Intelligence tool.

About Databricks Data Integration

Accessing and integrating live data from Databricks has never been easier with CData. Customers rely on CData connectivity to:

- Access all versions of Databricks, from Runtime versions 9.1 through 13.x to both the Pro and Classic Databricks SQL versions.

- Leave Databricks in their preferred environment thanks to compatibility with any hosting solution.

- Securely authenticate in a variety of ways, including personal access token, Azure Service Principal, and Azure AD.

- Upload data to Databricks using Databricks File System, Azure Blob Storage, and AWS S3 Storage.

While many customers use CData's solutions to migrate data from different systems into their Databricks data lakehouse, several customers use our live connectivity solutions to federate connectivity between their databases and Databricks. These customers use SQL Server Linked Servers or PolyBase to get live access to Databricks from within their existing RDBMS.

Read more about common Databricks use-cases and how CData's solutions help solve data problems in our blog: What is Databricks Used For? 6 Use Cases.

Getting Started

Connect to Databricks as an ODBC Data Source

If you have not already, first specify connection properties in an ODBC DSN (data source name). This is the last step of the driver installation. You can use the Microsoft ODBC Data Source Administrator to create and configure ODBC DSNs.

To connect to a Databricks cluster, set the properties as described below.

Note: You can find the required values in your Databricks instance by navigating to Clusters, selecting the desired cluster, and opening the JDBC/ODBC tab under Advanced Options.

- Server: Set to the Server Hostname of your Databricks cluster.

- HTTPPath: Set to the HTTP Path of your Databricks cluster.

- Token: Set to your personal access token (this value can be obtained by navigating to the User Settings page of your Databricks instance and selecting the Access Tokens tab).

When you configure the DSN, you may also want to set the Max Rows connection property. This will limit the number of rows returned, which is especially helpful for improving performance when designing reports and visualizations.

Create an ODBC Connection to Databricks Data

This section shows how to create a connection to the Databricks ODBC data source in the Information Design Tool. After you create a connection, you can analyze data or create a BusinessObjects universe.

Right-click your project and click New -> New Relational Connection.

In the wizard that is displayed, enter a name for the connection.

Select Generic -> Generic ODBC datasource -> ODBC Drivers and select the DSN.

Finish the wizard with the default values for connection pooling and custom parameters.

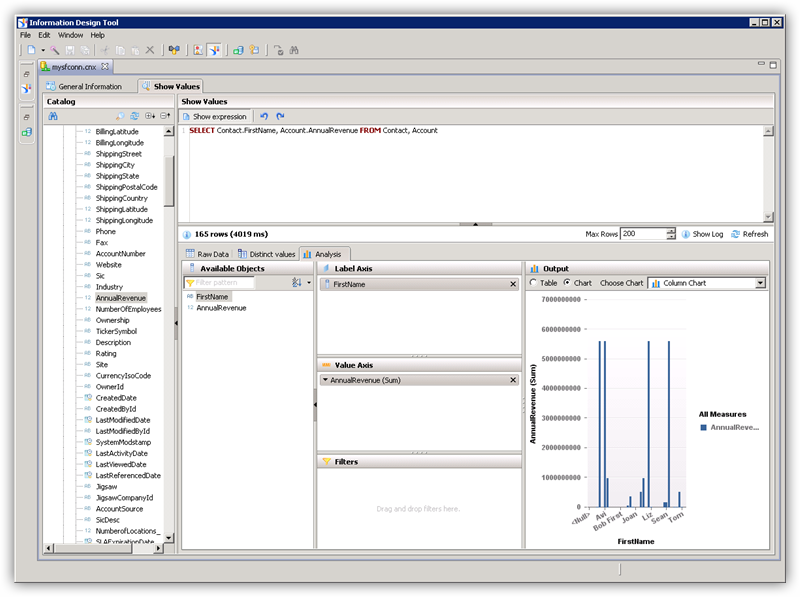

Analyze Databricks Data in the Information Design Tool

In the Information Design Tool, you can use both published and local ODBC connections to browse and query data.

In the Local Projects view, double-click the connection (the .cnx file) to open the Databricks data source.

On the Show Values tab, you can load table data and enter SQL queries. To view table data, expand the node for the table, right-click the table, and click Show Values. Values will be displayed in the Raw Data tab.

On the Analysis tab, you can drag and drop columns onto the axes of a chart.
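The Show Values step can also be approximated in a script, which is a quick way to confirm that the DSN returns data before you build a universe. The sketch below is an illustration only: `preview_table` is a hypothetical helper, `Customers` is a hypothetical table name, and an in-memory SQLite database stands in for Databricks so the example runs without the driver. With the driver installed, a connection from a DB-API library such as pyodbc (opened against your DSN) could be passed in instead.

```python
import re
import sqlite3

# Accept only simple identifiers so the table name can be safely interpolated.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def preview_table(conn, table, limit=10):
    """Return (column_names, rows) for the first `limit` rows of `table`.

    `conn` is any DB-API 2.0 connection; the helper itself is hypothetical.
    """
    if not _IDENTIFIER.match(table):
        raise ValueError(f"unsupported table name: {table!r}")
    cursor = conn.cursor()
    cursor.execute(f"SELECT * FROM {table} LIMIT {int(limit)}")
    columns = [col[0] for col in cursor.description]
    rows = [tuple(row) for row in cursor.fetchall()]
    return columns, rows


if __name__ == "__main__":
    # Stand-in data source; replace with a connection to your ODBC DSN.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE Customers (Id INTEGER, City TEXT)")
    conn.executemany(
        "INSERT INTO Customers VALUES (?, ?)",
        [(1, "Raleigh"), (2, "Durham"), (3, "Cary")],
    )
    columns, rows = preview_table(conn, "Customers", limit=2)
    print(columns)
    print(rows)
```

Keeping the row limit small mirrors the purpose of the Max Rows property: a preview only needs enough rows to confirm that the connection and table metadata are correct.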

Publish the Local Connection

To publish the universe to the CMS (Central Management Server), you must also publish the connection.

In the Local Projects view, right-click the connection and click Publish Connection to a Repository.

Enter the host and port of the repository and connection credentials.

Select the folder where the connection will be published.

In the success dialog that results, click Yes to create a connection shortcut.

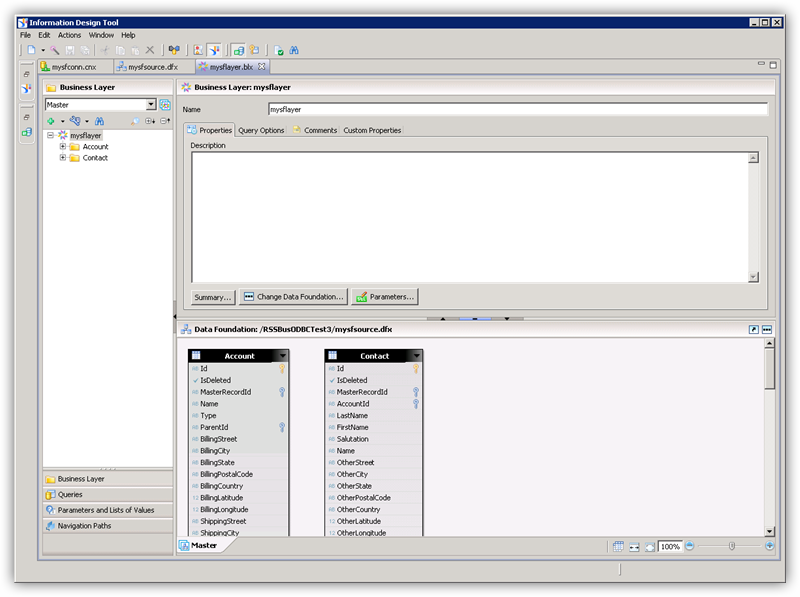

Create a Universe on the ODBC Driver for Databricks

You can follow the steps below to create a universe on the ODBC driver for Databricks. The universe in this example will be published to a repository, so it uses the published connection created in the previous step.

In the Information Design Tool, click File -> New Universe.

Select your project.

Select the option to create the universe on a relational data source.

Select the shortcut to the published connection.

Enter a name for the Data Foundation.

Import tables and columns that you want to access as objects.

Enter a name for the Business Layer.

Publish the Universe

You can follow the steps below to publish the universe to the CMS.

In the Local Projects view, right-click the business layer and click Publish -> To a Repository.

In the Publish Universe dialog, select any integrity checks to run before publishing.

Select or create a folder on the repository where the universe will be published.

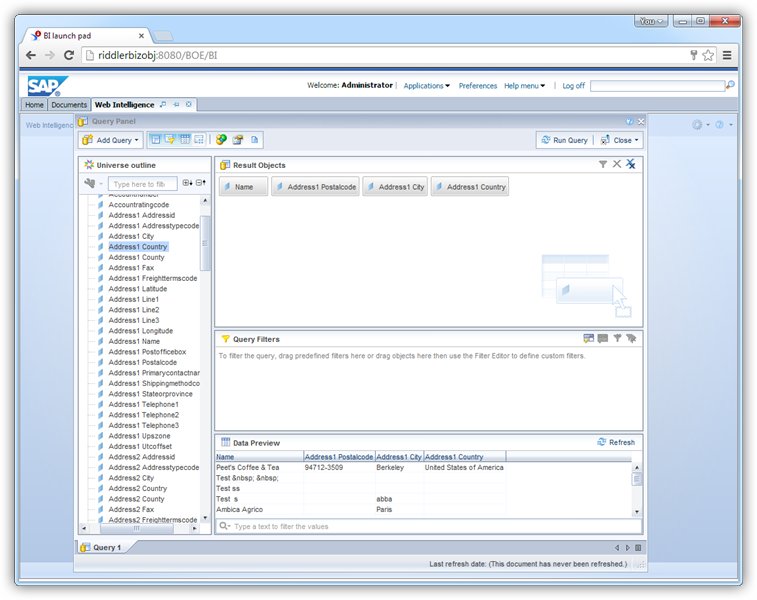

Query Databricks Data in Web Intelligence

You can use the published universe to connect to Databricks in Web Intelligence.

Open Web Intelligence from the BusinessObjects launchpad and create a new document.

Select the Universe option for the data source.

Select the Databricks universe. This opens a Query Panel. Drag objects to the Result Objects pane to use them in the query.