How to integrate Odoo with Apache Airflow

Access and process Odoo data in Apache Airflow using the CData JDBC Driver.

Apache Airflow supports the creation, scheduling, and monitoring of data engineering workflows. When paired with the CData JDBC Driver for Odoo, Airflow can work with live Odoo data. This article describes how to connect to and query Odoo data from an Apache Airflow instance and store the results in a CSV file.

With built-in optimized data processing, the CData JDBC driver offers unmatched performance for interacting with live Odoo data. When you issue complex SQL queries to Odoo, the driver pushes supported SQL operations, like filters and aggregations, directly to Odoo and utilizes the embedded SQL engine to process unsupported operations client-side (often SQL functions and JOIN operations). Its built-in dynamic metadata querying allows you to work with and analyze Odoo data using native data types.

About Odoo Data Integration

Accessing and integrating live data from Odoo has never been easier with CData. Customers rely on CData connectivity to:

- Access live data from both Odoo API 8.0+ and Odoo.sh Cloud ERP.

- Extend the native Odoo features with intelligent handling of many-to-one, one-to-many, and many-to-many data properties. In addition to columns with simple values like text and dates, Odoo has columns that contain multiple values on each row. The driver decodes these values differently, depending on the type of column the value comes from:

  - Many-to-one columns are references to a single row within another model. Within CData solutions, many-to-one columns are represented as integers, whose value is the ID of the row they refer to in the other model.

  - Many-to-many columns are references to many rows within another model. Within CData solutions, many-to-many columns are represented as text containing a comma-separated list of integers. Each value in that list is the ID of a referenced row.

  - One-to-many columns are references to many rows within another model. They are represented like many-to-many columns (comma-separated lists of integers), except that each row in the referenced model must belong to only one row in the main model.

- Use SQL stored procedures to call server-side RFCs within Odoo.
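
The relational column encodings described in the list above can be unpacked on the client once a query result is in hand. The following is a minimal sketch, not part of the CData driver: the helper name `decode_relation` is hypothetical, and the `kind` labels simply reuse Odoo's field type names.

```python
def decode_relation(value, kind):
    """Decode a relational column value as described above.

    `kind` is one of "many2one", "many2many", or "one2many".
    This helper is illustrative only and is not a CData API.
    """
    # Treat missing or empty values as "no reference"
    if value is None or value == "":
        return None if kind == "many2one" else []

    if kind == "many2one":
        # A single integer ID pointing at one row in the other model
        return int(value)

    if kind in ("many2many", "one2many"):
        # Text containing a comma-separated list of integer IDs
        return [int(part) for part in str(value).split(",") if part.strip()]

    raise ValueError(f"Unknown relation kind: {kind}")


print(decode_relation("3,7,12", "many2many"))
print(decode_relation(42, "many2one"))
```

Returning a list for both multi-valued kinds keeps downstream code uniform. The one-row-per-parent rule for one-to-many columns is a constraint on the Odoo side and is not checked here.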

Users frequently integrate Odoo with analytics tools such as Power BI and Qlik Sense, and leverage our tools to replicate Odoo data to databases or data warehouses.

Getting Started

Configuring the Connection to Odoo

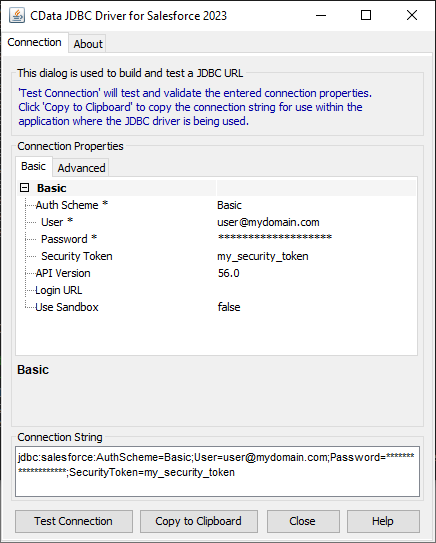

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Odoo JDBC Driver. Either double-click the JAR file or execute it from the command line:

```
java -jar cdata.jdbc.odoo.jar
```

Fill in the connection properties and copy the connection string to the clipboard.

To connect, set the Url to a valid Odoo site, User and Password to the connection details of the user you are connecting with, and Database to the Odoo database.

To host the JDBC driver in clustered environments or in the cloud, you will need a license (full or trial) and a Runtime Key (RTK). For more information on obtaining this license (or a trial), contact our sales team.

The following are essential properties needed for our JDBC connection.

| Property | Value |
|---|---|
| Database Connection URL | jdbc:odoo:RTK=5246...;User=MyUser;Password=MyPassword;URL=http://MyOdooSite/;Database=MyDatabase; |
| Database Driver Class Name | cdata.jdbc.odoo.OdooDriver |
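
The connection URL in the table above is a `jdbc:odoo:` prefix followed by semicolon-terminated `Key=Value` pairs. If you prefer to assemble it in code rather than with the designer, a minimal sketch might look like the following. The function name `build_odoo_jdbc_url` is hypothetical, and the sketch does not attempt any escaping: it simply rejects values that contain a semicolon.

```python
def build_odoo_jdbc_url(url, user, password, database, rtk=None):
    """Assemble a JDBC URL in the same shape as the table above.

    Illustrative helper only; it is not part of the CData driver.
    """
    props = {}
    if rtk:
        # The Runtime Key is only needed for clustered or cloud deployments
        props["RTK"] = rtk
    props.update(
        {"User": user, "Password": password, "URL": url, "Database": database}
    )

    for key, value in props.items():
        # A ';' inside a value would split the property list incorrectly
        if ";" in value:
            raise ValueError(f"{key} must not contain ';'")

    return "jdbc:odoo:" + "".join(f"{k}={v};" for k, v in props.items())


print(
    build_odoo_jdbc_url(
        url="http://MyOdooSite/",
        user="MyUser",
        password="MyPassword",
        database="MyDatabase",
    )
)
```

Building the string from a dictionary keeps credentials out of hard-coded literals, so they can be read from a secrets store or environment variables instead.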

Establishing a JDBC Connection within Airflow

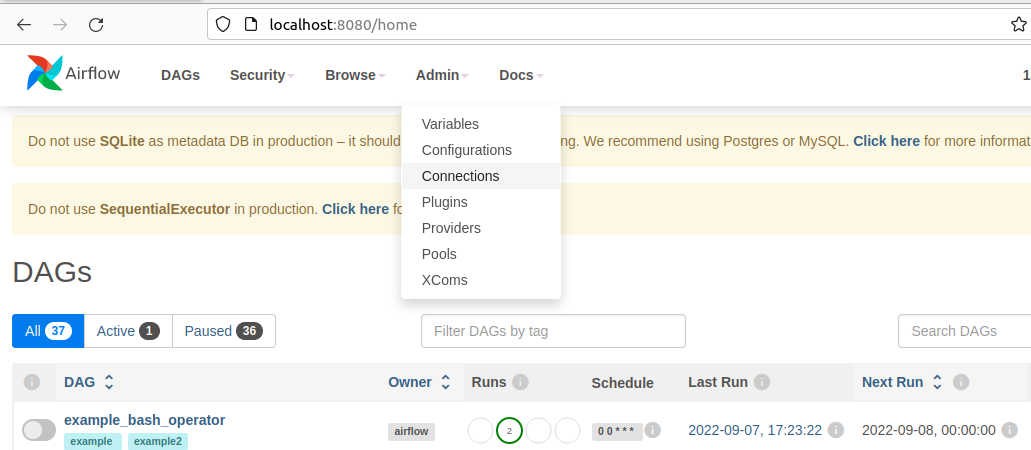

- Log into your Apache Airflow instance.

- On the navbar of your Airflow instance, hover over Admin and then click Connections.

![Clicking connections]()

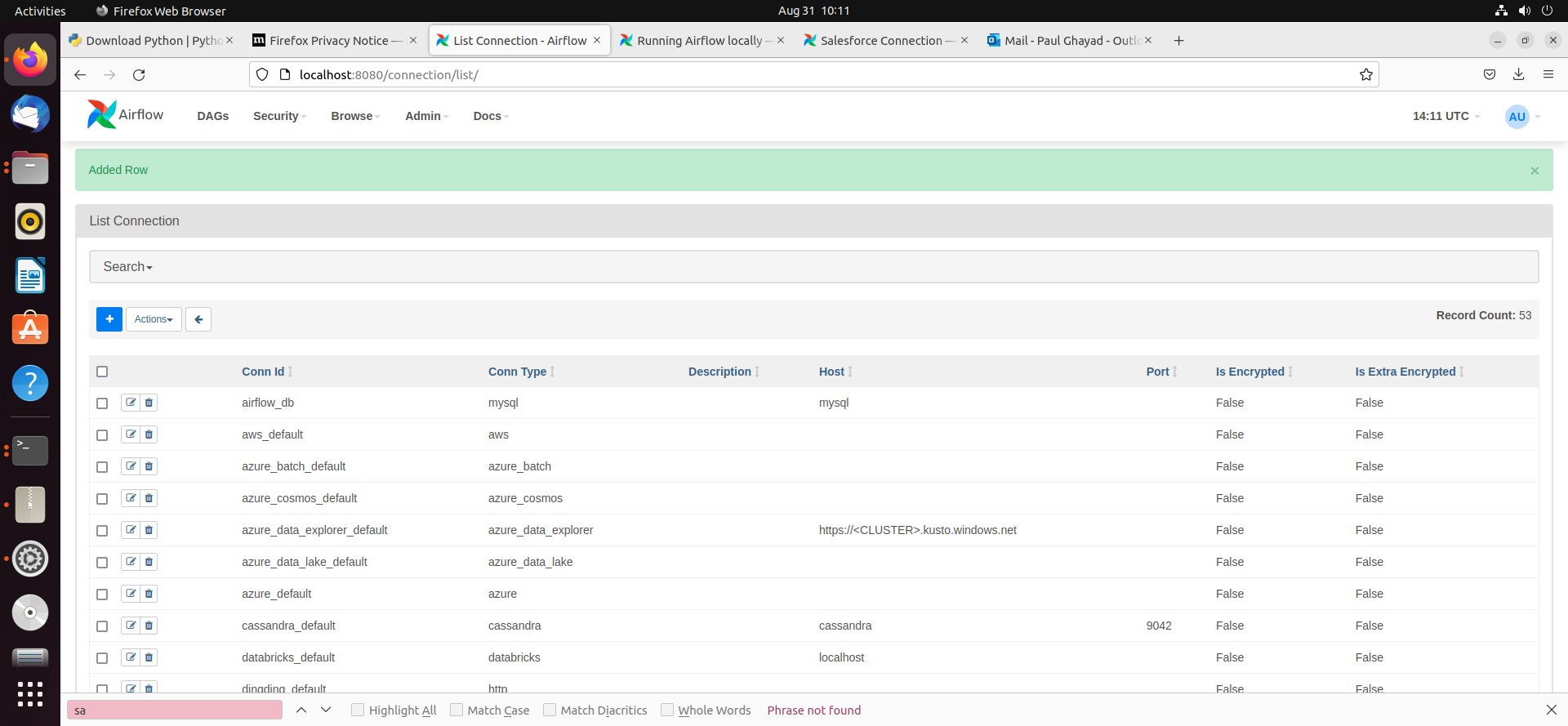

- Next, click the + sign on the following screen to create a new connection.

- In the Add Connection form, fill out the required connection properties:

  - Connection Id: A name for the connection, e.g., odoo_jdbc

  - Connection Type: JDBC Connection

  - Connection URL: The JDBC connection URL from above, e.g., jdbc:odoo:RTK=5246...;User=MyUser;Password=MyPassword;URL=http://MyOdooSite/;Database=MyDatabase;

  - Driver Class: cdata.jdbc.odoo.OdooDriver

  - Driver Path: PATH/TO/cdata.jdbc.odoo.jar

![Add JDBC connection form]()

- Test your new connection by clicking the Test button at the bottom of the form.

- After saving the new connection, on a new screen, you should see a green banner saying that a new row was added to the list of connections:

![New connection added]()
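
As an alternative to the Add Connection form, Airflow can also read a connection from an environment variable named `AIRFLOW_CONN_<CONN_ID>`, whose value may be a JSON document (Airflow 2.3 and later). The sketch below only builds that name and value. The helper name is hypothetical, and the `driver_class` and `driver_path` keys under `extra` are an assumption about the JDBC provider: key names and whether they are honored depend on your provider version and configuration, so check its documentation before relying on them.

```python
import json


def airflow_jdbc_conn_env(conn_id, jdbc_url, driver_class, driver_path):
    """Return (variable_name, json_value) for an Airflow JDBC connection.

    Illustrative helper only. The "extra" key names are assumptions and
    should be verified against the installed JDBC provider.
    """
    name = "AIRFLOW_CONN_" + conn_id.upper()
    value = json.dumps(
        {
            "conn_type": "jdbc",
            # The JDBC hook reads the connection URL from the host field
            "host": jdbc_url,
            "extra": {
                "driver_class": driver_class,  # assumed key name
                "driver_path": driver_path,    # assumed key name
            },
        }
    )
    return name, value


name, value = airflow_jdbc_conn_env(
    "odoo_jdbc",
    "jdbc:odoo:User=MyUser;Password=MyPassword;URL=http://MyOdooSite/;Database=MyDatabase;",
    "cdata.jdbc.odoo.OdooDriver",
    "PATH/TO/cdata.jdbc.odoo.jar",
)
print(name)
print(value)
```

Connections defined this way do not appear in the Connections list in the UI, so the form remains the simpler choice when you want to use the Test button.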

Creating a DAG

A DAG in Airflow is an entity that stores the processes for a workflow and can be triggered to run this workflow. Our workflow is to simply run a SQL query against Odoo data and store the results in a CSV file.

- To get started, locate the "airflow" folder in your home directory. Inside it, create a new directory named "dags". Airflow parses the Python files stored in this directory and shows them as DAGs in the UI.

- Next, create a new Python file and title it odoo_hook.py. Insert the following code inside of this new file:

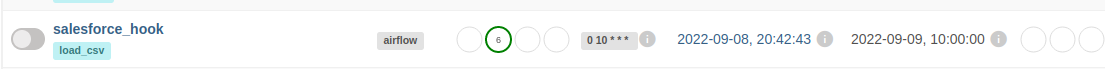

```python
from datetime import datetime

from airflow.decorators import dag, task
from airflow.providers.jdbc.hooks.jdbc import JdbcHook


# Declare the DAG: runs daily at 10:00, without backfilling missed runs.
# On Airflow 2.4+, `schedule` is the preferred name for `schedule_interval`.
@dag(
    dag_id="odoo_hook",
    schedule_interval="0 10 * * *",
    start_date=datetime(2022, 2, 15),
    catchup=False,
    tags=["load_csv"],
)
def extract_and_load():
    # Define tasks
    @task()
    def jdbc_extract():
        try:
            # Use the Connection Id created in the Add Connection form
            hook = JdbcHook(jdbc_conn_id="odoo_jdbc")
            sql = """ select * from Account """
            df = hook.get_pandas_df(sql)
            # quoting=1 is csv.QUOTE_ALL: every field is wrapped in quotes
            df.to_csv("/{some_file_path}/{name_of_csv}.csv", header=False, index=False, quoting=1)
            print(df)
            # The returned dict is passed to downstream tasks via XCom
            return df.to_dict("dict")
        except Exception as e:
            print("Data extract error: " + str(e))
            raise  # re-raise so Airflow marks the task as failed

    jdbc_extract()


odoo_extract_and_load = extract_and_load()
```

- Save this file and refresh your Airflow instance. Within the list of DAGs, you should see a new DAG titled "odoo_hook".

![New DAG added]()

- Click on this DAG and, on the new screen, click the unpause switch so that it turns blue, and then click the trigger (i.e., play) button to run the DAG. This executes the SQL query in the odoo_hook.py file and exports the results as a CSV file to the path designated in the code.

![Run the DAG]()

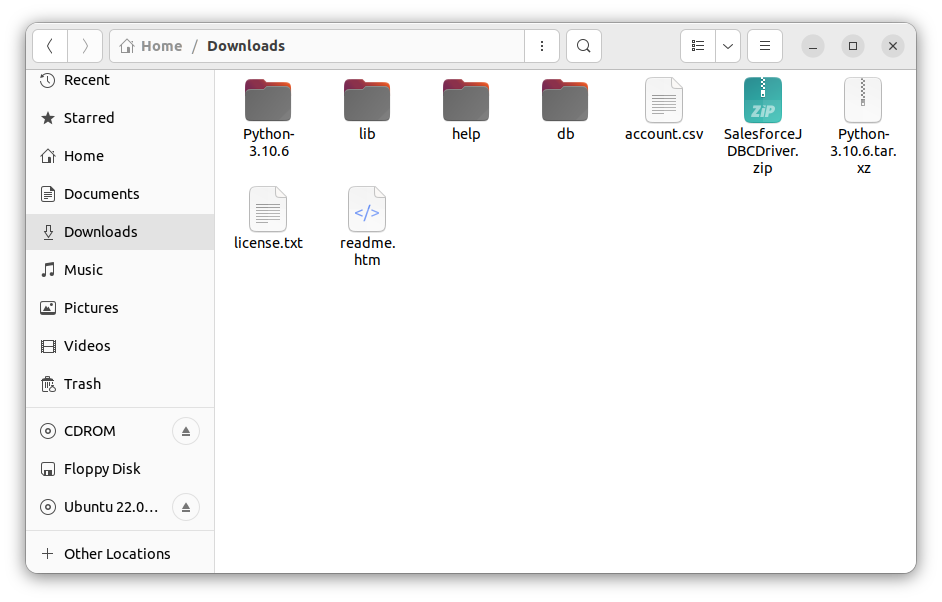

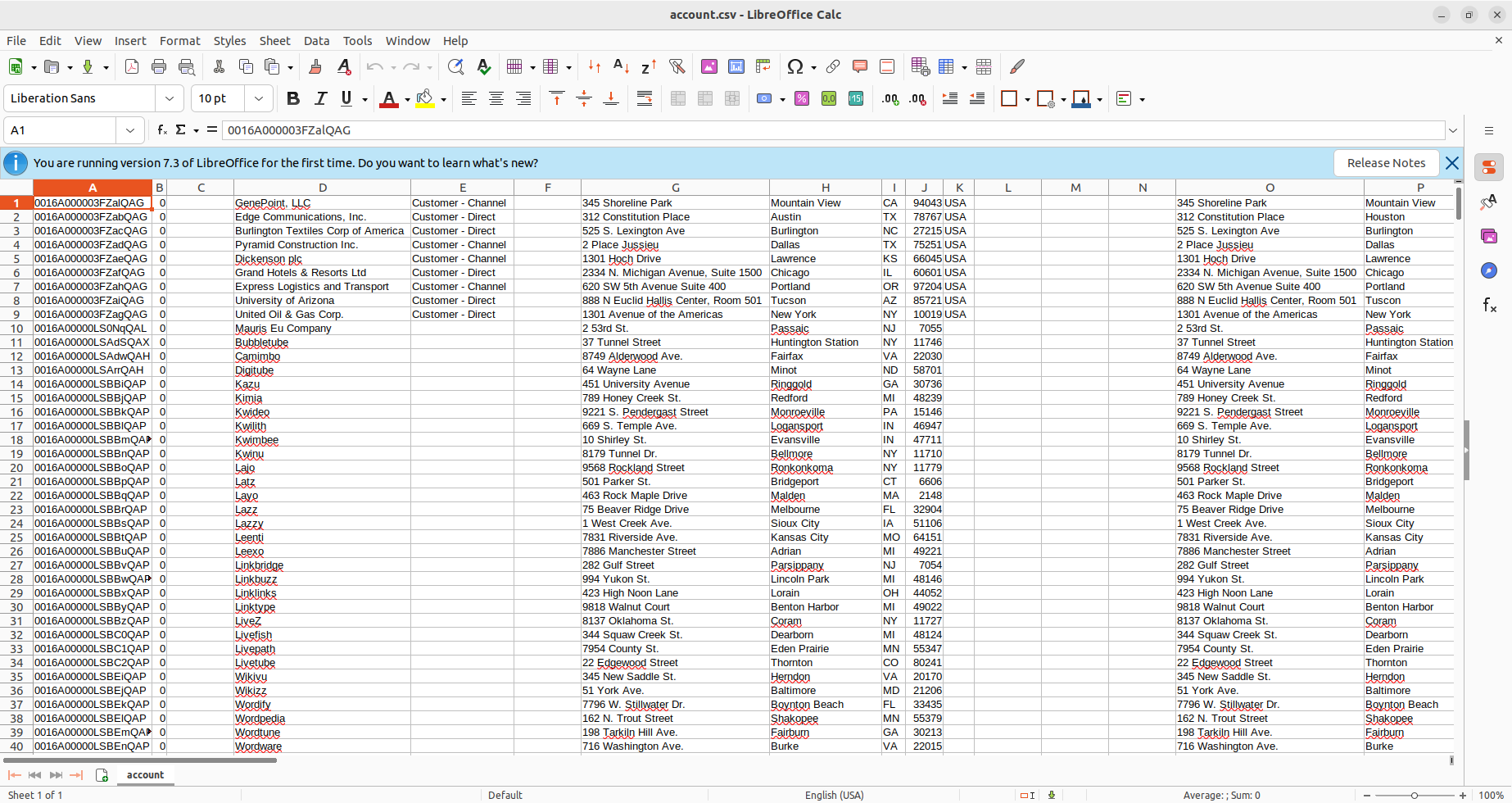

- After triggering the new DAG, check the Downloads folder (or whichever location you chose in your Python script) to confirm that the CSV file has been created, in this case account.csv.

![CSV created]()

- Open the CSV file to see that your Odoo data is now available for use in CSV format thanks to Apache Airflow.

![CSV file with Odoo data.]()
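
Because the DAG writes the file with `header=False` and `quoting=1` (which is `csv.QUOTE_ALL`), the CSV has no header row and every field is quoted. A minimal sketch for reading such a file back with Python's standard library follows. The helper name, the column names, and the two sample rows are placeholders made up for illustration; they are not real Odoo data or the actual columns of the Account table.

```python
import csv
import io


def read_headerless_csv(text, column_names):
    """Parse header-less CSV text into a list of dicts keyed by column_names."""
    reader = csv.reader(io.StringIO(text))
    return [dict(zip(column_names, row)) for row in reader]


# Placeholder content in the same shape the DAG produces (all fields quoted)
sample = '"1","Example A"\n"2","Example B"\n'

rows = read_headerless_csv(sample, ["id", "name"])
print(rows)
# [{'id': '1', 'name': 'Example A'}, {'id': '2', 'name': 'Example B'}]
```

All values come back as strings, so numeric columns need an explicit conversion. If you would rather keep column names in the file itself, setting `header=True` in the `to_csv` call is the simpler option.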