How to Work with Workday Data in AWS Glue Jobs Using JDBC

Connect to Workday from AWS Glue jobs using the CData JDBC Driver hosted in Amazon S3.

AWS Glue is an ETL service from Amazon that allows you to easily prepare and load your data for storage and analytics. Using the PySpark module along with AWS Glue, you can create jobs that work with data over JDBC connectivity, loading the data directly into AWS data stores. In this article, we walk through uploading the CData JDBC Driver for Workday into an Amazon S3 bucket and creating and running an AWS Glue job to extract Workday data and store it in S3 as a CSV file.

About Workday Data Integration

CData provides the easiest way to access and integrate live data from Workday. Customers use CData connectivity to:

- Access the tables and datasets you create in Prism Analytics Data Catalog, working with the native Workday data hub without compromising the fidelity of your Workday system.

- Access Workday Reports-as-a-Service to surface data from departmental datasets not available from Prism and datasets larger than Prism allows.

- Access base data objects with WQL, REST, or SOAP, getting more granular, detailed access but with the potential need for Workday admins or IT to help craft queries.

Users frequently integrate Workday with analytics tools such as Tableau, Power BI, and Excel, and leverage our tools to replicate Workday data to databases or data warehouses. Access is secured at the user level, based on the authenticated user's identity and role.

For more information on configuring Workday to work with CData, refer to our Knowledge Base articles "Comprehensive Workday Connectivity through Workday WQL and Reports-as-a-Service" and "Workday + CData: Connection & Integration Best Practices".

Getting Started

Upload the CData JDBC Driver for Workday to an Amazon S3 Bucket

In order to work with the CData JDBC Driver for Workday in AWS Glue, you will need to store it (and any relevant license files) in an Amazon S3 bucket.

- Open the Amazon S3 Console.

- Select an existing bucket (or create a new one).

- Click Upload.

- Select the JAR file (cdata.jdbc.workday.jar) found in the lib directory in the installation location for the driver.
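If you would rather script the upload than use the console, the steps above can be sketched with boto3. This is a minimal sketch, not the documented procedure: the bucket name, local path, and optional key prefix are placeholders, and the helper names are hypothetical. It assumes boto3 is installed and AWS credentials are configured wherever `upload_driver` runs.

```python
import os


def driver_key(local_jar: str, prefix: str = "") -> str:
    """Build the S3 object key for the driver JAR, keeping the JAR file name."""
    parts = [prefix.strip("/"), os.path.basename(local_jar)]
    return "/".join(part for part in parts if part)


def jar_s3_uri(bucket: str, local_jar: str, prefix: str = "") -> str:
    """Return the full S3 URI of the uploaded JAR, including the file name."""
    return f"s3://{bucket}/{driver_key(local_jar, prefix)}"


def upload_driver(bucket: str, local_jar: str, prefix: str = "") -> str:
    """Upload the driver JAR to S3 and return its S3 URI."""
    # Imported lazily so the pure helpers above work without boto3 installed.
    import boto3

    boto3.client("s3").upload_file(local_jar, bucket, driver_key(local_jar, prefix))
    return jar_s3_uri(bucket, local_jar, prefix)
```

The URI returned by `jar_s3_uri` includes the JAR file name, which is the form the Glue job configuration expects for the dependent jars path.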

Configure the AWS Glue Job

- Navigate to ETL -> Jobs from the AWS Glue Console.

- Click Add Job to create a new Glue job.

- Fill in the Job properties:

  - Name: Fill in a name for the job, for example: WorkdayGlueJob.

  - IAM Role: Select (or create) an IAM role that has the AWSGlueServiceRole and AmazonS3FullAccess permissions policies. The latter policy is necessary to access both the JDBC Driver and the output destination in Amazon S3.

  - Type: Select "Spark".

  - Glue Version: Select "Spark 2.4, Python 3 (Glue Version 1.0)".

  - This job runs: Select "A new script to be authored by you".

- Populate the script properties:

  - Script file name: A name for the script file, for example: GlueWorkdayJDBC.

  - S3 path where the script is stored: Fill in or browse to an S3 bucket.

  - Temporary directory: Fill in or browse to an S3 bucket.

- Expand Security configuration, script libraries and job parameters (optional). For Dependent jars path, fill in or browse to the S3 bucket where you uploaded the JAR file. Be sure to include the name of the JAR file itself in the path, for example: s3://mybucket/cdata.jdbc.workday.jar

- Click Next. Here you have the option to add connections to other AWS endpoints. If your destination is Redshift, MySQL, or another data store, you can create and use connections to those data sources.

- Click "Save job and edit script" to create the job.

- In the editor that opens, write a Python script for the job. You can use the sample script (see below) as an example.
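The console settings above can also be expressed as a job definition for the boto3 Glue client. The sketch below only builds the keyword arguments for `create_job` and checks that the dependent jars path ends with the JAR file name. The function name, role name, and S3 paths are illustrative assumptions, not values from this article's setup.

```python
def glue_job_definition(name, role, script_s3_path, temp_dir, driver_jar_s3_path):
    """Build keyword arguments for the boto3 Glue client's create_job call."""
    # The dependent jars path must point at the JAR file itself, not just the bucket.
    if not (driver_jar_s3_path.startswith("s3://") and driver_jar_s3_path.endswith(".jar")):
        raise ValueError("Dependent jars path must be an s3:// URI ending with the JAR file name")
    return {
        "Name": name,
        "Role": role,
        "GlueVersion": "1.0",  # Spark 2.4, Python 3
        "Command": {
            "Name": "glueetl",  # Spark job type
            "ScriptLocation": script_s3_path,
            "PythonVersion": "3",
        },
        "DefaultArguments": {
            "--extra-jars": driver_jar_s3_path,  # Dependent jars path
            "--TempDir": temp_dir,               # Temporary directory
        },
    }


# Placeholder values; replace them with your own role, bucket, and paths.
definition = glue_job_definition(
    name="WorkdayGlueJob",
    role="MyGlueRole",
    script_s3_path="s3://mybucket/scripts/GlueWorkdayJDBC.py",
    temp_dir="s3://mybucket/temp",
    driver_jar_s3_path="s3://mybucket/cdata.jdbc.workday.jar",
)
# With boto3 installed and credentials configured, the job could then be created with:
#   boto3.client("glue").create_job(**definition)
```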

Sample Glue Script

To connect to Workday using the CData JDBC driver, you will need to create a JDBC URL, populating the necessary connection properties. Additionally, you will need to set the RTK property in the JDBC URL (unless you are using a Beta driver). You can view the licensing file included in the installation for information on how to set this property.

To connect to Workday, users need to find the Tenant and BaseURL and then select their API type.

Obtaining the BaseURL and Tenant

To obtain the BaseURL and Tenant properties, log into Workday and search for "View API Clients." On this screen, you'll find the Workday REST API Endpoint, a URL that includes both the BaseURL and Tenant.

The format of the REST API Endpoint is: https://domain.com/subdirectories/mycompany, where:

- https://domain.com/subdirectories/ is the BaseURL.

- mycompany (the portion of the URL after the very last slash) is the Tenant.
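The split described above can be sketched in a few lines of Python. The function name `split_rest_endpoint` is hypothetical, and the sketch assumes the endpoint follows the format shown, with the Tenant as the final path segment.

```python
from typing import Tuple


def split_rest_endpoint(endpoint: str) -> Tuple[str, str]:
    """Split a Workday REST API Endpoint into (BaseURL, Tenant)."""
    # Everything up to and including the last slash is the BaseURL;
    # the segment after the last slash is the Tenant.
    base, _, tenant = endpoint.rstrip("/").rpartition("/")
    if not tenant or "://" not in base or base.endswith("/"):
        raise ValueError(f"Endpoint has no tenant path segment: {endpoint!r}")
    return base + "/", tenant


base_url, tenant = split_rest_endpoint("https://domain.com/subdirectories/mycompany")
# base_url is "https://domain.com/subdirectories/" and tenant is "mycompany"
```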

Using ConnectionType to Select the API

The value you use for the ConnectionType property determines which Workday API you use. See our Community Article for more information on Workday connectivity options and best practices.

| API | ConnectionType Value |
|---|---|
| WQL | WQL |
| Reports as a Service | Reports |
| REST | REST |
| SOAP | SOAP |

Authentication

Your method of authentication depends on which API you are using.

- WQL, Reports as a Service, REST: Use OAuth authentication.

- SOAP: Use Basic or OAuth authentication.

See the Help documentation for more information on configuring OAuth with Workday.
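As a quick sanity check before building a connection string, the ConnectionType values and authentication rules above can be encoded in a small lookup. This is only a sketch: `check_connection` is a hypothetical helper, and the strings "OAuth" and "Basic" are labels used here for illustration, not confirmed driver property values.

```python
# ConnectionType value -> authentication methods the article lists for that API.
ALLOWED_AUTH = {
    "WQL": {"OAuth"},
    "Reports": {"OAuth"},
    "REST": {"OAuth"},
    "SOAP": {"Basic", "OAuth"},
}


def check_connection(connection_type: str, auth: str) -> None:
    """Raise ValueError if the ConnectionType or its authentication method is not listed above."""
    if connection_type not in ALLOWED_AUTH:
        raise ValueError(f"Unknown ConnectionType: {connection_type!r}")
    if auth not in ALLOWED_AUTH[connection_type]:
        allowed = ", ".join(sorted(ALLOWED_AUTH[connection_type]))
        raise ValueError(f"{connection_type} supports {allowed} authentication, not {auth!r}")
```

For example, `check_connection("SOAP", "Basic")` passes silently, while `check_connection("WQL", "Basic")` raises a `ValueError`.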

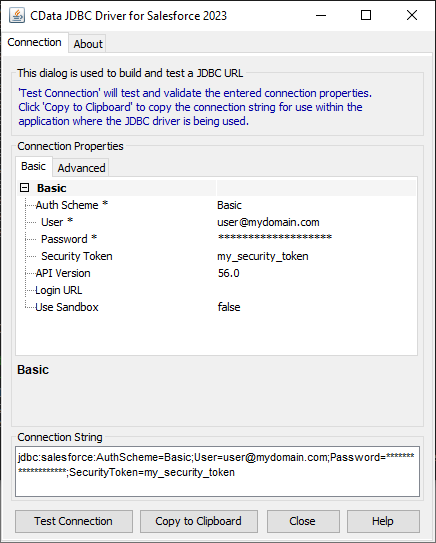

Built-in Connection String Designer

For assistance in constructing the JDBC URL, use the connection string designer built into the Workday JDBC Driver. Either double-click the JAR file or run it from the command line:

java -jar cdata.jdbc.workday.jar

Fill in the connection properties and copy the connection string to the clipboard.
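If you prefer to assemble the JDBC URL in code, the `jdbc:workday:Key=Value;` layout used in this article's sample script can be reproduced with a small helper. This is a minimal sketch: `build_jdbc_url` is a hypothetical function, the property values are placeholders, and it assumes values contain no semicolons, since escaping rules are not covered here.

```python
def build_jdbc_url(properties: dict) -> str:
    """Join connection properties into a jdbc:workday: URL, skipping unset values."""
    pairs = []
    for key, value in properties.items():
        if value is None:
            continue  # leave out properties that were not provided
        if ";" in str(value):
            # Assumption: this sketch does not attempt to escape semicolons.
            raise ValueError(f"Value for {key} contains ';', which this sketch does not escape")
        pairs.append(f"{key}={value};")
    return "jdbc:workday:" + "".join(pairs)


url = build_jdbc_url({
    "Tenant": "mycompany",
    "BaseURL": "https://domain.com/subdirectories/",
    "ConnectionType": "WQL",
})
# url == "jdbc:workday:Tenant=mycompany;BaseURL=https://domain.com/subdirectories/;ConnectionType=WQL;"
```

Add the RTK and your authentication properties to the dictionary in the same way before passing the URL to the Glue script.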

To host the JDBC driver in Amazon S3, you will need a license (full or trial) and a Runtime Key (RTK). For more information on obtaining this license (or a trial), contact our sales team.

Below is a sample script that uses the CData JDBC driver with the PySpark and AWSGlue modules to extract Workday data and write it to an S3 bucket in CSV format. Make any necessary changes to the script to suit your needs and save the job.

    import sys

    from awsglue.transforms import *
    from awsglue.utils import getResolvedOptions
    from pyspark.context import SparkContext
    from awsglue.context import GlueContext
    from awsglue.dynamicframe import DynamicFrame
    from awsglue.job import Job

    args = getResolvedOptions(sys.argv, ['JOB_NAME'])

    sparkContext = SparkContext()
    glueContext = GlueContext(sparkContext)
    sparkSession = glueContext.spark_session

    # Use the CData JDBC driver to read Workday data from the Workers table into a DataFrame.
    # Note the populated JDBC URL and driver class name.
    source_df = (
        sparkSession.read.format("jdbc")
        .option("url", "jdbc:workday:RTK=5246...;User=myuser;Password=mypassword;Tenant=mycompany_gm1;BaseURL=https://wd3-impl-services1.workday.com;ConnectionType=WQL;")
        .option("dbtable", "Workers")
        .option("driver", "cdata.jdbc.workday.WorkdayDriver")
        .load()
    )

    glueJob = Job(glueContext)
    glueJob.init(args['JOB_NAME'], args)

    # Convert the DataFrame to an AWS Glue DynamicFrame.
    dynamic_dframe = DynamicFrame.fromDF(source_df, glueContext, "dynamic_df")

    # Write the DynamicFrame as a file in CSV format to a folder in an S3 bucket.
    # It is possible to write to any Amazon data store (SQL Server, Redshift, etc.)
    # by using any previously defined connections.
    retDatasink4 = glueContext.write_dynamic_frame.from_options(
        frame = dynamic_dframe,
        connection_type = "s3",
        connection_options = {"path": "s3://mybucket/outfiles"},
        format = "csv",
        transformation_ctx = "datasink4"
    )

    glueJob.commit()

Run the Glue Job

With the script written, we are ready to run the Glue job. Click Run Job and wait for the extract/load to complete. You can view the status of the job from the Jobs page in the AWS Glue Console. Once the job succeeds, you will have a CSV file in your S3 bucket with data from the Workday Workers table.
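The run-and-wait step can also be scripted. The sketch below starts a run and polls its state through a Glue client that you pass in, for example `boto3.client("glue")`. The function name, polling interval, and the set of states treated as final are assumptions made for illustration.

```python
import time

# Job run states treated as final in this sketch.
FINAL_STATES = {"SUCCEEDED", "FAILED", "STOPPED", "TIMEOUT", "ERROR", "EXPIRED"}


def run_and_wait(glue_client, job_name, poll_seconds=30, sleep=time.sleep):
    """Start a Glue job run and poll until it reaches a final state."""
    run_id = glue_client.start_job_run(JobName=job_name)["JobRunId"]
    while True:
        run = glue_client.get_job_run(JobName=job_name, RunId=run_id)["JobRun"]
        state = run["JobRunState"]
        if state in FINAL_STATES:
            return state
        sleep(poll_seconds)  # wait before checking the status again
```

With boto3 installed and credentials configured, `run_and_wait(boto3.client("glue"), "WorkdayGlueJob")` would return the final state of the run, such as `"SUCCEEDED"`.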

Using the CData JDBC Driver for Workday in AWS Glue, you can easily create ETL jobs for Workday data, whether writing the data to an S3 bucket or loading it into any other AWS data store.